How MSPs can use AI to recruit fast and hire right: Part 2

AI might be making some business processes move faster, like recruiting candidates and writing job descriptions, but it’s creating some rocky terrain in the hiring landscape as well.

The first installment of our AI in hiring series covered the current state of the hiring landscape and the AI doom cycle. In this installment, we’ll take a closer look at the key issues MSPs need to be aware of as they incorporate AI tools into their hiring process, and how they can guide customers who are also looking to adopt AI solutions in HR. These issues include: the rise of fraudulent applications, the legal risk associated with AI candidate filtration, and why HR professionals are so resistant to integrating identity verification into the hiring pipeline. Here’s what you need to know.

Fraudulent Applications on the Rise

There is a growing concern among hiring professionals that the candidates they are interviewing are fraudulent. Hiring platform Greenhouse’s 2025 AI in Hiring report, which surveyed over 4,100 job seekers, hiring managers, and recruiters, found that 74% of hiring managers say they’re more concerned about fake credentials, deepfakes, or misrepresented experience than they were a year ago.

The report also found that 91% of U.S. hiring managers have caught or suspected AI-driven candidate misrepresentation. The most common candidate fraud tactics in the U.S. are fake backgrounds or voice changers (32%), AI scripts during interviews (32%), and deepfakes (18%).

The Risk Factor

But what’s at risk here isn’t just the wasted time, effort, and resources put into a bad-fit candidate. “People have been hiring fake people. They get fired within a month or two; that costs a lot of money,” says a cybersecurity sector recruiter who asked to remain anonymous. “But not only that, I found out one company got a person from North Korea in this way—and the guy stole $51 million worth of data.”

“If you get one [malicious actor] in your company who is good at an IT level, they can destroy your company in a minute,” the cybersecurity recruiter explains. “You’ve just opened your system to somebody you don’t know, who could be sending all your data back to another country, stealing social security numbers. You’re giving them all the data they need to cause fraud at a high level.”

In her breakout session at Gartner’s Identity and Access Management summit in December, Emi Chiba, HR tech analyst at Gartner, spoke on the increasing risk. “By 2028, we expect 1 in 4 candidate profiles worldwide to be fake,” she said. “Hiring fraudulent candidates opens you and your organization to a wide range of risk—cybersecurity, legal, and financial risks. If you give malicious actors employee access, they can steal your data; they can steal your intellectual property.”

Red Flags of Fraud

Employers are finding ways to identify fraudulent applications, largely by cross-checking data. But be warned: as AI grows more sophisticated, employers may need to find other identifiers in the future.

A common red flag is “overfitting,” says the cybersecurity recruiter. “Anytime you see somebody whose previous job is perfectly aligned with your job description, like a 90% match, it’s an overfitting. I use AI to identify the overfitters, because they’re having their resumes written by AI. I can’t tell what their real experience is because of that.”

It’s not just that a candidate’s work history matches your job description too closely though, says the cybersecurity recruiter. “AI overfits, but it may only be the last job that they had that overfits. The rest of the history before doesn’t seem to be related, or it’s not as significant, but their last job of two years is perfect. That’s not normal. People don’t usually work that way.”

Another sign of a fraudulent applicant is an oddly short amount of time on LinkedIn. “If you look on LinkedIn and the guy has only been on LinkedIn for a month or two… that’s not normal for somebody who’s been in the industry for 10 years. They’re going to have a LinkedIn profile from years ago,” the recruiter says.

Other indicators include fictional companies, real companies that didn’t exist yet when the candidate apparently worked there, LinkedIn profiles that don’t match resume experience, and multiple, different LinkedIn profiles of the same person.
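The cross-checks described above can be sketched as a simple screening helper. This is a minimal illustration, not a vetted fraud detector: the `Job` and `Candidate` records, the `match_score` field (a hypothetical 0 to 1 similarity between a job entry and your job description), the company lookup table, and the thresholds are all assumptions made for this example. The 90% cutoff and the "month or two" profile age come from the recruiter's comments; the 90-day and five-year values are illustrative stand-ins.

```python
from dataclasses import dataclass
from datetime import date

OVERFIT_THRESHOLD = 0.9   # "like a 90% match" per the recruiter
MIN_PROFILE_DAYS = 90     # assumed cutoff for a suspiciously new LinkedIn profile
LONG_CAREER_YEARS = 5     # assumed cutoff for "experienced" candidates


@dataclass
class Job:
    company: str
    start: date
    match_score: float  # hypothetical 0-1 similarity to the job description


@dataclass
class Candidate:
    jobs: list          # list of Job, most recent first
    linkedin_created: date
    claimed_years: int


def red_flags(candidate, company_founded, today):
    """Return human-readable red flags for a recruiter to review.

    company_founded maps company name -> founding date (hypothetical lookup).
    """
    flags = []

    # Overfitting: the most recent job matches the description almost perfectly.
    if candidate.jobs and candidate.jobs[0].match_score >= OVERFIT_THRESHOLD:
        flags.append("latest job overfits the job description")

    # A very new LinkedIn profile paired with a long claimed career.
    profile_age_days = (today - candidate.linkedin_created).days
    if (profile_age_days < MIN_PROFILE_DAYS
            and candidate.claimed_years >= LONG_CAREER_YEARS):
        flags.append("LinkedIn profile is new for the claimed experience")

    # Fictional companies, or real ones that did not exist yet.
    for job in candidate.jobs:
        founded = company_founded.get(job.company)
        if founded is None:
            flags.append(f"company not found: {job.company}")
        elif job.start < founded:
            flags.append(f"{job.company} did not exist at claimed start date")

    return flags
```

A result from a helper like this is a prompt for human review, not a rejection decision. Each flag only marks a profile that deserves a closer look.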

“I imagine recruiters are going to have to start researching companies,” the recruiter says. “AI is going to help find the fraud, but AI is creating the fraud.”

Beware of Hiring Bias

While these indicators can help you pick out fake applicants, Chiba warned attendees against one frequent piece of advice in particular: “that you should stop hiring people who don’t look like they match their name.” Not only is a name not a good indicator of fraud, but as Chiba points out, it is “wildly illegal.”

Anti-discrimination laws enforced by the Equal Employment Opportunity Commission (EEOC) prohibit eliminating a candidate based on race, national origin, sex, age, and gender identity, all of which could be inferred from a candidate’s name.

But human bias isn’t the only factor that could get you in trouble with the EEOC. AI has demonstrated bias of its own before, and if you’re not paying attention to how it’s filtering candidates, you could be liable.

California’s amendments to the Fair Employment and Housing Act earlier this year are a good example. They set strict guidelines for AI candidate filtration, including requiring evidence of AI anti-bias testing to avoid unlawful discrimination and mandating that employers keep records of automated decision-making systems’ data for at least four years. The requirements also prohibit algorithms that have a “disparate impact” on a protected group. Even an employer that isn’t intentionally using AI to discriminate among candidates could be liable for using an algorithm shown to have an adverse impact on a protected class.
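One common way to screen for disparate impact is the "four-fifths" rule of thumb from the EEOC's Uniform Guidelines: compare each group's selection rate to the highest group's rate and look closely at any ratio below 0.8. The sketch below assumes you can count, per group, how many applicants an AI filter advanced. The function names and data layout are hypothetical, and the article does not say California's rules use this specific test, so treat it as a monitoring aid rather than a legal standard.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (advanced, applied); returns group -> rate."""
    return {
        group: advanced / applied
        for group, (advanced, applied) in outcomes.items()
        if applied > 0  # skip groups with no applicants
    }


def impact_ratios(outcomes):
    """Ratio of each group's selection rate to the highest group's rate."""
    rates = selection_rates(outcomes)
    if not rates:
        return {}
    best = max(rates.values())
    if best == 0:
        # Nobody was advanced, so there is no rate to compare against.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """Groups whose impact ratio falls below the four-fifths threshold."""
    ratios = impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)
```

For example, if a filter advances 50 of 100 applicants from one group and 30 of 100 from another, the second group's ratio is 0.6 and it would be flagged for review. A flag is a reason to investigate the algorithm and involve counsel, not proof of unlawful discrimination.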

And California’s regulations are only the beginning. Legislation concerning AI in hiring is being passed at the state and federal level, so it’s more important than ever that employers using AI for candidate filtration keep a sharp eye on their algorithms.

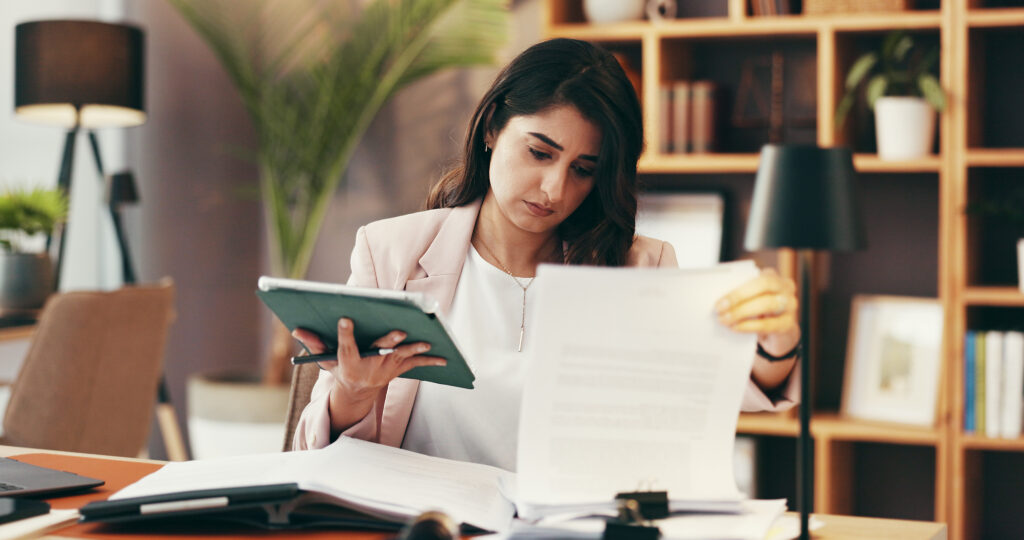

Expect Wary HR Professionals

This concern over bias is exactly why HR professionals are so careful to minimize their own biases by keeping protected information at arm’s length. While identity verification early in the hiring process may be the answer to the problem of fraudulent applicants (more on that next time), MSPs will likely meet pushback from HR professionals when proposing that idea.

“Because,” Chiba said, “when you bring an identity document into this process, those identity documents can also tell whether someone is part of a protected class.”

“Traditionally, the mandate has been to keep any of that identifying information as far away from the decision-making process as possible,” she said. “Though, it can also work in your favor” as an MSP. “I’ve spoken to HR leaders who say they’re not in the business of checking people’s identification before making an offer to employees. But having a vendor to do that takes the onus off of HR.” Partnering with your clients’ HR departments to securely verify the identities of their candidates, without compromising the hiring manager’s integrity, could be both a revenue opportunity and a solution to this issue, though Chiba acknowledged that data security and limited storage of privileged information would be key.

In Risk Lies Opportunity

The hiring landscape, now radically changed by AI, is full of risk, both from fraud and from running afoul of legislation. But mitigating fraud is an MSP’s bread and butter, and the MSPs who adapt quickly for their own hiring needs, and can pass those mitigation strategies on to their clients, stand poised to support HR departments as trusted advisors and an essential part of the new hiring process.

In the third and final installment of our AI in hiring series, we’ll cover Chiba’s assessment of the least disruptive, yet thorough, way to integrate identity verification into the hiring process, the importance of the human element, and ways MSPs can automate their hiring processes without legal risk.

In the meantime, check out how MSPs like you see AI reshaping their business in 2026.
